
Physicians have historically been slow to adopt strategies to improve patient experience, often citing suboptimal data and a lack of evidence-driven strategies.1,2 However, public reporting of hospital-level physician domain Hospital Consumer Assessment of Healthcare Providers and Systems (HCAHPS) experience scores, and the more recent linking of payments to performance on patient experience metrics, have been associated with significant increases in physician domain scores for most hospitals.3 Hospitals and healthcare organizations have deployed a broad range of strategies to engage physicians. These include emphasizing the relationship between patient experience and patient compliance, complaints, and malpractice lawsuits; appealing to physicians’ sense of competitiveness by publishing individual provider experience scores; educating physicians on HCAHPS and providing them with regularly updated data; and developing specific techniques for improving patient-physician interaction.4-8

Studies show that educational curricula on improving etiquette and communication skills for physicians lead to improvement in patient experience, and many such training programs are available to hospitals at a significant cost.9-15 Other studies that have focused on providing timely and individual feedback to physicians using tools other than HCAHPS have shown improvement in experience in some instances.16,17 However, these strategies are resource intensive, require the presence of an independent observer in each patient room, and may not be practical in many settings. Further, long-term sustainability may be problematic.

Since the goal of any educational intervention targeting physicians is routinizing best practices, and since resource-intensive strategies of continuous assessment and feedback may not be practical, we sought to test the impact of periodic physician self-reporting of their etiquette-based behavior on their patient experience scores.

METHODS

Subjects

Hospitalists from 4 hospitals (2 community and 2 academic) that are part of the same healthcare system were the study subjects. Hospitalists who had at least 15 unique patients responding to the routinely administered Press Ganey experience survey during the baseline period were considered eligible. Eligible hospitalists were invited to enroll in the study if their site director confirmed that the provider was likely to stay with the group for the subsequent 12-month study period.

Randomization, Intervention and Control Group

Hospitalists were randomized to the study arm or control arm (1:1 randomization). Study arm participants received biweekly etiquette behavior (EB) surveys and were asked to report how frequently they performed 7 best-practice bedside etiquette behaviors during the previous 2-week period (Table 1). These behaviors were predefined by a consensus group of investigators as being amenable to self-report and commonly considered best practice, as described in detail below. Control arm participants received a similarly worded survey on quality improvement behaviors (QIB) that would not be expected to impact patient experience (such as reviewing medications to ensure that antithrombotic prophylaxis was prescribed; Table 1).

Baseline and Study Periods

The 12-month period prior to the enrollment of each hospitalist was considered the baseline period for that individual. Hospitalist eligibility was assessed based on the number of unique patients for each hospitalist who responded to the survey during this baseline period. Once a hospitalist was enrolled, baseline provider-level patient experience scores were calculated from the survey responses during this 12-month baseline period. Baseline self-reported etiquette behavior performance was calculated from the first survey. After the initial survey, hospitalists received biweekly surveys (EB or QIB) for the 12-month study period, for a total of 26 surveys (including the initial survey).

Survey Development, Nature of Survey, Survey Distribution Methods

The EB and QIB physician self-report surveys were developed through an iterative process by the study team. The EB survey included elements from an etiquette-based medicine checklist for hospitalized patients described by Kahn.18 We conducted a review of the literature to identify evidence-based practices.19-22 Research team members contributed items on best practices in etiquette-based medicine from their experience. Specifically, behaviors were selected if they met the following 4 criteria: 1) performing the behavior did not lead to a significant increase in workload and was relatively easy to incorporate into the workflow; 2) occurrence of the behavior would be easy to note for any outside observer or the providers themselves; 3) the practice was considered to be either an evidence-based or consensus-based best practice; 4) there was consensus among study team members on including the item. The survey was tested for understandability by hospitalists who were not eligible for the study.

The EB survey contained 7 items related to behaviors that were expected to impact patient experience. The QIB survey contained 4 items related to behaviors that were expected to improve quality (Table 1). The initial survey also included questions about demographic characteristics of the participants.

Survey questionnaires were sent via email every 2 weeks for a period of 12 months. The survey questionnaire became available every other week, between Friday morning and Tuesday midnight, during the study period. Hospitalists who had not yet completed the survey received a daily email reminder on each of these days with a link to the survey website. They had the opportunity to report that they were not on service in the prior week and opt out of the survey for the specific 2-week period. The survey questions were available online and in a mobile device format.

Provider Level Patient Experience Scores

Provider-level patient experience scores were calculated from the physician domain Press Ganey survey items: time the physician spent with the patient, physician addressed questions/worries, physician kept the patient informed, friendliness/courtesy of the physician, and skill of the physician. Press Ganey responses were scored from 1 to 5 based on the Likert scale responses on the survey, such that a response of “very good” was scored 5 and a response of “very poor” was scored 1. Additionally, responses to the physician domain HCAHPS items (doctors treat with courtesy/respect, doctors listen carefully, doctors explain in a way patients understand) were used to calculate a separate set of HCAHPS provider-level experience scores. These responses were scored as 1 for an “always” response and 0 for any other response, consistent with the CMS dichotomization of these results for public reporting. Weighted scores were calculated for individual hospitalists based on the proportion of days each hospitalist billed for the hospitalization, so that experience scores of patients who were cared for by multiple providers were assigned to each provider in proportion to the percent of care delivered.23 Separate composite physician scores were generated from the 5 Press Ganey items and the 3 HCAHPS physician items. Each item was weighted equally; the maximum possible Press Ganey composite score was 25 (a maximum of 5 on each of the 5 items), and the maximum possible HCAHPS composite score was 3 (a maximum of 1 on each of the 3 items).
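The attribution step can be illustrated with a minimal sketch. It assumes that each patient's composite score is shared among providers in proportion to billed days and that a provider's score is the weighted mean of those contributions; the exact procedure is the one described in reference 23, and the function names and data layout here are hypothetical.

```python
from collections import defaultdict


def hcahps_top_box(response):
    """Dichotomize an HCAHPS response: 1 for "always", 0 for anything else."""
    return 1 if response.strip().lower() == "always" else 0


def provider_scores(patients):
    """Weighted mean patient score per provider (illustrative assumption).

    patients: list of dicts with
      "score": the patient's composite experience score
      "billed_days": {provider_id: days billed during that hospitalization}
    """
    weighted_sum = defaultdict(float)
    weight_total = defaultdict(float)
    for patient in patients:
        total_days = sum(patient["billed_days"].values())
        if total_days <= 0:
            continue  # no billing information, so nothing to attribute
        for provider, days in patient["billed_days"].items():
            if days <= 0:
                continue
            weight = days / total_days  # share of care delivered
            weighted_sum[provider] += weight * patient["score"]
            weight_total[provider] += weight
    return {p: weighted_sum[p] / weight_total[p] for p in weighted_sum}


example = [
    {"score": 5, "billed_days": {"A": 3, "B": 1}},
    {"score": 3, "billed_days": {"A": 1, "B": 1}},
]
scores = provider_scores(example)
```

In this made-up example, provider A billed most of the days for the higher-scoring patient and therefore ends up with a higher attributed score than provider B.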

ANALYSIS AND STATISTICAL METHODS

We analyzed the data to assess changes in the frequency of self-reported behavior over the study period, changes in provider-level patient experience between the baseline and study periods, and the association between these 2 outcomes. The self-reported etiquette-based behavior responses were scored from 1 for the lowest response (never) to 4 for the highest (always). With 7 questions, the maximum attainable score was 28. The maximum score was normalized to 100 for ease of interpretation (corresponding to the percentage of time etiquette behaviors were employed, by self-report). Similarly, the maximum attainable self-reported QIB-related behavior score on the 4 questions was 16. This was also converted to a 0-100 scale for ease of comparison.
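As a worked illustration of this normalization, the sketch below assumes the simplest reading of the text: the summed item scores are divided by the maximum attainable sum and multiplied by 100. The function name is hypothetical. Under this assumption the lowest possible score maps to 25 rather than 0, because every item scores at least 1.

```python
def normalized_score(item_scores, max_per_item=4):
    """Rescale summed item scores so the maximum attainable sum equals 100.

    item_scores: one integer per survey item, each from 1 (never)
    to max_per_item (always).
    """
    if not item_scores:
        raise ValueError("at least one item score is required")
    for score in item_scores:
        if not 1 <= score <= max_per_item:
            raise ValueError(f"item score out of range: {score}")
    max_total = max_per_item * len(item_scores)  # 28 for EB, 16 for QIB
    return 100.0 * sum(item_scores) / max_total


eb_best = normalized_score([4] * 7)          # 28 of 28 points
eb_worst = normalized_score([1] * 7)         # 7 of 28 points
qib_example = normalized_score([4, 3, 4, 3]) # 14 of 16 points
```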

Two additional sets of analyses were performed to evaluate changes in patient experience during the study period. First, the mean 12-month provider-level patient experience composite score in the baseline period was compared with the mean composite score during the 12-month study period for the study group and the control group. These comparisons were made with and without adjusting for age, sex, race, and U.S. medical school graduate (USMG) status. In the second set of unadjusted and adjusted analyses, changes in biweekly composite scores during the study period were compared between the intervention and control groups using linear mixed models, which accommodate correlation among multiple observations made on the same physician by including random effects within each regression model. Furthermore, these models allowed us to account for the unbalanced design of our data, in which not all physicians had an equal number of observations and data elements were collected asynchronously.24 Analyses were performed in R version 3.2.2 (The R Project for Statistical Computing, Vienna, Austria); linear mixed models were fitted using the ‘nlme’ package.25

We hypothesized that self-reporting on biweekly surveys would result in increases in the frequency of the reported behavior in each arm. We also hypothesized that, because of biweekly reflection and self-reporting on etiquette-based bedside behavior, patient experience scores would increase in the study arm.

RESULTS

Of the 80 hospitalists approached to participate in the study, 64 elected to participate (80% participation rate). The mean response rate to the survey was 57.4% for the intervention arm and 85.7% for the control arm. Higher response rates were not associated with improved patient experience scores. Of the respondents, 43.1% were younger than 35 years of age, 51.5% practiced in academic settings, and 53.1% were female. There was no statistically significant difference in hospitalists’ baseline composite experience scores based on gender, age, academic hospitalist status, USMG status, or English as a second language status. Similarly, there were no differences in poststudy composite experience scores based on physician characteristics.

Physicians reported high rates of etiquette-based behavior at baseline (mean score, 83.9 ± 3.3), and this showed moderate improvement over the study period (5.6% [3.9%-7.3%]; P < 0.0001). Similarly, there was a moderate increase in the frequency of self-reported behavior in the control arm (6.8% [3.5%-10.1%]; P < 0.0001). Hospitalists reported on 80.7% (77.6%-83.4%) of the biweekly surveys that they “almost always” wrapped up by asking, “Do you have any other questions or concerns?” or something similar. In contrast, hospitalists reported on only 27.9% (24.7%-31.3%) of the biweekly surveys that they “almost always” sat down in the patient room.

The composite physician domain Press Ganey experience scores were no different for the intervention arm and the control arm during the 12-month baseline period (21.8 vs. 21.7; P = 0.90) and the 12-month intervention period (21.6 vs. 21.5; P = 0.75). Baseline self-reported behaviors were not associated with baseline experience scores. Similarly, there were no differences between the arms on composite physician domain HCAHPS experience scores during baseline (2.1 vs. 2.3; P = 0.13) and intervention periods (2.2 vs. 2.1; P = 0.33).

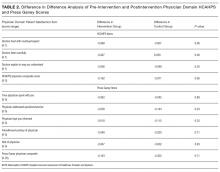

The difference-in-difference analysis of the baseline and postintervention composite scores between the intervention arm and the control arm was not statistically significant for Press Ganey composite physician experience scores (-0.163 vs. -0.322; P = 0.71) or HCAHPS composite physician scores (-0.162 vs. -0.071; P = 0.06). The results did not change when controlled for survey response rate (percentage of biweekly surveys completed by the hospitalist), age, gender, USMG status, English as a second language status, or percent clinical effort. The difference-in-difference analysis of the individual Press Ganey and HCAHPS physician domain items that were used to calculate the composite scores was also not statistically significant (Table 2).
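The arithmetic behind a difference-in-difference estimate can be sketched as follows. This assumes that each reported pair gives the within-arm change from baseline to the intervention period, listed as intervention arm first and control arm second; the function name is hypothetical, and the sketch reproduces only the point estimate, not the significance test.

```python
def difference_in_differences(change_intervention, change_control):
    """Change in the intervention arm minus change in the control arm."""
    return change_intervention - change_control


# Within-arm changes as reported in the text (ordering assumed).
press_ganey_did = difference_in_differences(-0.163, -0.322)
hcahps_did = difference_in_differences(-0.162, -0.071)

print(f"Press Ganey composite: {press_ganey_did:+.3f}")
print(f"HCAHPS composite: {hcahps_did:+.3f}")
```

A positive value means the intervention arm declined less (or improved more) than the control arm; a negative value means the opposite.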

Changes in self-reported etiquette-based behavior were not associated with any changes in composite Press Ganey and HCAHPS experience score or individual items of the composite experience scores between baseline and intervention period. Similarly, biweekly self-reported etiquette behaviors were not associated with composite and individual item experience scores derived from responses of the patients discharged during the same 2-week reporting period. The intra-class correlation between observations from the same physician was only 0.02%, suggesting that most of the variation in scores was likely due to patient factors and did not result from differences between physicians.
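For readers less familiar with the intra-class correlation, the sketch below shows the usual variance-components definition: the share of total variance that lies between physicians. The variance values are hypothetical and chosen only to reproduce a correlation of 0.02%; they are not the study's estimates.

```python
def intraclass_correlation(var_between, var_within):
    """Proportion of total variance attributable to differences between physicians."""
    total = var_between + var_within
    if total <= 0:
        raise ValueError("total variance must be positive")
    return var_between / total


# Hypothetical variance components, for illustration only.
icc = intraclass_correlation(var_between=0.0002, var_within=0.9998)
print(f"ICC = {icc:.2%}")
```

A value this close to zero means that knowing which physician cared for a patient explains almost none of the variation in that patient's score.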

DISCUSSION

This 12-month randomized multicenter study of hospitalists showed that repeated self-reporting of etiquette-based behavior results in modest reported increases in performance of these behaviors. However, there was no associated increase in provider level patient experience scores at the end of the study period when compared to baseline scores of the same physicians or when compared to the scores of the control group. The study demonstrated feasibility of self-reporting of behaviors by physicians with high participation when provided modest incentives.

Educational and feedback strategies used to improve patient experience are very resource intensive. Training sessions provided at some hospitals may take hours, and sustained effects are unproven. The presence of an independent observer in patient rooms to generate feedback for providers is neither scalable nor sustainable outside of a research study environment.9-11,15,17,26-29 We attempted to use repeated physician self-reporting to reinforce the important and easy-to-adopt components of etiquette-based behavior in order to develop a more easily sustainable strategy. This may have failed for several reasons.

When combining “always” and “usually” responses, the physicians in our study reported a high level of etiquette behavior at baseline. If physicians believe that they are performing well at baseline, they would not consider this to be an area in need of improvement. Bigger changes in behavior may have been possible had the physicians rated themselves less favorably at baseline. Inflated or high baseline self-assessment of performance might also have led to limited success of other types of educational interventions had they been employed.

Studies published since the rollout of our study have shown that physicians significantly overestimate how frequently they perform these etiquette behaviors.30,31 It is likely that this was also the case among our study subjects. At best, this may indicate that a much larger change in the level of self-reported performance would be needed to produce meaningful actual changes; at worst, it may render self-reported etiquette behavior entirely unreliable. Interventions designed to improve etiquette-based behavior might need to provide feedback about performance.

A program that provides education on the importance of etiquette-based behaviors, obtains objective measures of performance of these behaviors, and offers individualized feedback may be more likely to increase the desired behaviors. The absence of these components is a limitation of our study. However, we aimed to test a method that required limited resources. Additionally, our method for attributing HCAHPS scores to an individual physician, based on weighted scores that were calculated according to the proportion of days each hospitalist billed for the hospitalization, may be inaccurate. It is possible that each interaction does not contribute equally to the overall score. A team-based intervention and team-based experience measurements could overcome this limitation.

CONCLUSION

This randomized trial demonstrated the feasibility of self-assessment of bedside etiquette behaviors by hospitalists but failed to demonstrate a meaningful impact on patient experience through self-report. These findings suggest that more intensive interventions, perhaps involving direct observation, peer-to-peer mentoring, or other techniques, may be required to significantly impact physician etiquette behaviors.

Disclosure

Johns Hopkins Hospitalist Scholars Program provided funding support. Dr. Qayyum is a consultant for Sunovion. The other authors have nothing to report.

1. Blumenthal D, Kilo CM. A report card on continuous quality improvement. Milbank Q. 1998;76(4):625-648.

2. Shortell SM, Bennett CL, Byck GR. Assessing the impact of continuous quality improvement on clinical practice: What it will take to accelerate progress. Milbank Q. 1998;76(4):593-624.

3. Mann RK, Siddiqui Z, Kurbanova N, Qayyum R. Effect of HCAHPS reporting on patient satisfaction with physician communication. J Hosp Med. 2015;11(2):105-110.

4. Rivers PA, Glover SH. Health care competition, strategic mission, and patient satisfaction: research model and propositions. J Health Organ Manag. 2008;22(6):627-641.

5. Kim SS, Kaplowitz S, Johnston MV. The effects of physician empathy on patient satisfaction and compliance. Eval Health Prof. 2004;27(3):237-251.

6. Stelfox HT, Gandhi TK, Orav EJ, Gustafson ML. The relation of patient satisfaction with complaints against physicians and malpractice lawsuits. Am J Med. 2005;118(10):1126-1133.

7. Rodriguez HP, Rodday AM, Marshall RE, Nelson KL, Rogers WH, Safran DG. Relation of patients’ experiences with individual physicians to malpractice risk. Int J Qual Health Care. 2008;20(1):5-12.

8. Cydulka RK, Tamayo-Sarver J, Gage A, Bagnoli D. Association of patient satisfaction with complaints and risk management among emergency physicians. J Emerg Med. 2011;41(4):405-411.

9. Windover AK, Boissy A, Rice TW, Gilligan T, Velez VJ, Merlino J. The REDE model of healthcare communication: Optimizing relationship as a therapeutic agent. Journal of Patient Experience. 2014;1(1):8-13.

10. Chou CL, Hirschmann K, Fortin AH 6th, Lichstein PR. The impact of a faculty learning community on professional and personal development: the facilitator training program of the American Academy on Communication in Healthcare. Acad Med. 2014;89(7):1051-1056.

11. Kennedy M, Denise M, Fasolino M, John P, Gullen M, David J. Improving the patient experience through provider communication skills building. Patient Experience Journal. 2014;1(1):56-60.

12. Braverman AM, Kunkel EJ, Katz L, et al. Do I buy it? How AIDET™ training changes residents’ values about patient care. Journal of Patient Experience. 2015;2(1):13-20.

13. Riess H, Kelley JM, Bailey RW, Dunn EJ, Phillips M. Empathy training for resident physicians: a randomized controlled trial of a neuroscience-informed curriculum. J Gen Intern Med. 2012;27(10):1280-1286.

14. Rothberg MB, Steele JR, Wheeler J, Arora A, Priya A, Lindenauer PK. The relationship between time spent communicating and communication outcomes on a hospital medicine service. J Gen Intern Med. 2012;27(2):185-189.

15. O’Leary KJ, Cyrus RM. Improving patient satisfaction: timely feedback to specific physicians is essential for success. J Hosp Med. 2015;10(8):555-556.

16. Indovina K, Keniston A, Reid M, et al. Real‐time patient experience surveys of hospitalized medical patients. J Hosp Med. 2016;11(4):251-256.

17. Banka G, Edgington S, Kyulo N, et al. Improving patient satisfaction through physician education, feedback, and incentives. J Hosp Med. 2015;10(8):497-502.

18. Kahn MW. Etiquette-based medicine. N Engl J Med. 2008;358(19):1988-1989.

19. Arora V, Gangireddy S, Mehrotra A, Ginde R, Tormey M, Meltzer D. Ability of hospitalized patients to identify their in-hospital physicians. Arch Intern Med. 2009;169(2):199-201.

20. Francis JJ, Pankratz VS, Huddleston JM. Patient satisfaction associated with correct identification of physicians’ photographs. Mayo Clin Proc. 2001;76(6):604-608.

21. Strasser F, Palmer JL, Willey J, et al. Impact of physician sitting versus standing during inpatient oncology consultations: patients’ preference and perception of compassion and duration. A randomized controlled trial. J Pain Symptom Manage. 2005;29(5):489-497.

22. Dudas RA, Lemerman H, Barone M, Serwint JR. PHACES (Photographs of Academic Clinicians and Their Educational Status): a tool to improve delivery of family-centered care. Acad Pediatr. 2010;10(2):138-145.

23. Herzke C, Michtalik H, Durkin N, et al. A method for attributing patient-level metrics to rotating providers in an inpatient setting. J Hosp Med. Under revision.

24. Holden JE, Kelley K, Agarwal R. Analyzing change: a primer on multilevel models with applications to nephrology. Am J Nephrol. 2008;28(5):792-801.

25. Pinheiro J, Bates D, DebRoy S, Sarkar D. Linear and nonlinear mixed effects models. R package version. 2007;3:57.

26. Braverman AM, Kunkel EJ, Katz L, et al. Do I buy it? How AIDET™ training changes residents’ values about patient care. Journal of Patient Experience. 2015;2(1):13-20.

27. Riess H, Kelley JM, Bailey RW, Dunn EJ, Phillips M. Empathy training for resident physicians: a randomized controlled trial of a neuroscience-informed curriculum. J Gen Intern Med. 2012;27(10):1280-1286.

28. Raper SE, Gupta M, Okusanya O, Morris JB. Improving communication skills: A course for academic medical center surgery residents and faculty. J Surg Educ. 2015;72(6):e202-e211.

29. Indovina K, Keniston A, Reid M, et al. Real‐time patient experience surveys of hospitalized medical patients. J Hosp Med. 2016;11(4):251-256.

30. Block L, Hutzler L, Habicht R, et al. Do internal medicine interns practice etiquette‐based communication? A critical look at the inpatient encounter. J Hosp Med. 2013;8(11):631-634.

31. Tackett S, Tad-y D, Rios R, Kisuule F, Wright S. Appraising the practice of etiquette-based medicine in the inpatient setting. J Gen Intern Med. 2013;28(7):908-913.

Physicians have historically had limited adoption of strategies to improve patient experience and often cite suboptimal data and lack of evidence-driven strategies. 1,2 However, public reporting of hospital-level physician domain Hospital Consumer Assessment of Healthcare Providers and Systems (HCAHPS) experience scores, and more recent linking of payments to performance on patient experience metrics, have been associated with significant increases in physician domain scores for most of the hospitals. 3 Hospitals and healthcare organizations have deployed a broad range of strategies to engage physicians. These include emphasizing the relationship between patient experience and patient compliance, complaints, and malpractice lawsuits; appealing to physicians’ sense of competitiveness by publishing individual provider experience scores; educating physicians on HCAHPS and providing them with regularly updated data; and development of specific techniques for improving patient-physician interaction. 4-8

Studies show that educational curricula on improving etiquette and communication skills for physicians lead to improvement in patient experience, and many such training programs are available to hospitals for a significant cost.9-15 Other studies that have focused on providing timely and individual feedback to physicians using tools other than HCAHPS have shown improvement in experience in some instances. 16,17 However, these strategies are resource intensive, require the presence of an independent observer in each patient room, and may not be practical in many settings. Further, long-term sustainability may be problematic.

Since the goal of any educational intervention targeting physicians is routinizing best practices, and since resource-intensive strategies of continuous assessment and feedback may not be practical, we sought to test the impact of periodic physician self-reporting of their etiquette-based behavior on their patient experience scores.

METHODS

Subjects

Hospitalists from 4 hospitals (2 community and 2 academic) that are part of the same healthcare system were the study subjects. Hospitalists who had at least 15 unique patients responding to the routinely administered Press Ganey experience survey during the baseline period were considered eligible. Eligible hospitalists were invited to enroll in the study if their site director confirmed that the provider was likely to stay with the group for the subsequent 12-month study period.

Randomization, Intervention and Control Group

Hospitalists were randomized to the study arm or control arm (1:1 randomization). Study arm participants received biweekly etiquette behavior (EB) surveys and were asked to report how frequently they performed 7 best-practice bedside etiquette behaviors during the previous 2-week period (Table 1). These behaviors were pre-defined by a consensus group of investigators as being amenable to self-report and commonly considered best practice as described in detail below. Control-arm participants received similarly worded survey on quality improvement behaviors (QIB) that would not be expected to impact patient experience (such as reviewing medications to ensure that antithrombotic prophylaxis was prescribed, Table 1).

Baseline and Study Periods

A 12-month period prior to the enrollment of each hospitalist was considered the baseline period for that individual. Hospitalist eligibility was assessed based on number of unique patients for each hospitalist who responded to the survey during this baseline period. Once enrolled, baseline provider-level patient experience scores were calculated based on the survey responses during this 12-month baseline period. Baseline etiquette behavior performance of the study was calculated from the first survey. After the initial survey, hospitalists received biweekly surveys (EB or QIB) for the 12-month study period for a total of 26 surveys (including the initial survey).

Survey Development, Nature of Survey, Survey Distribution Methods

The EB and QIB physician self-report surveys were developed through an iterative process by the study team. The EB survey included elements from an etiquette-based medicine checklist for hospitalized patients described by Kahn et al. 18 We conducted a review of literature to identify evidence-based practices.19-22 Research team members contributed items on best practices in etiquette-based medicine from their experience. Specifically, behaviors were selected if they met the following 4 criteria: 1) performing the behavior did not lead to significant increase in workload and was relatively easy to incorporate in the work flow; 2) occurrence of the behavior would be easy to note for any outside observer or the providers themselves; 3) the practice was considered to be either an evidence-based or consensus-based best-practice; 4) there was consensus among study team members on including the item. The survey was tested for understandability by hospitalists who were not eligible for the study.

The EB survey contained 7 items related to behaviors that were expected to impact patient experience. The QIB survey contained 4 items related to behaviors that were expected to improve quality (Table 1). The initial survey also included questions about demographic characteristics of the participants.

Survey questionnaires were sent via email every 2 weeks for a period of 12 months. The survey questionnaire became available every other week, between Friday morning and Tuesday midnight, during the study period. Hospitalists received daily email reminders on each of these days with a link to the survey website if they did not complete the survey. They had the opportunity to report that they were not on service in the prior week and opt out of the survey for the specific 2-week period. The survey questions were available online as well as on a mobile device format.

Provider Level Patient Experience Scores

Provider-level patient experience scores were calculated from the physician domain Press Ganey survey items, which included the time that the physician spent with patients, the physician addressed questions/worries, the physician kept patients informed, the friendliness/courtesy of physician, and the skill of physician. Press Ganey responses were scored from 1 to 5 based on the Likert scale responses on the survey such that a response “very good” was scored 5 and a response “very poor” was scored 1. Additionally, physician domain HCAHPS item (doctors treat with courtesy/respect, doctors listen carefully, doctors explain in way patients understand) responses were utilized to calculate another set of HCAHPS provider level experience scores. The responses were scored as 1 for “always” response and “0” for any other response, consistent with CMS dichotomization of these results for public reporting. Weighted scores were calculated for individual hospitalists based on the proportion of days each hospitalist billed for the hospitalization so that experience scores of patients who were cared for by multiple providers were assigned to each provider in proportion to the percent of care delivered.23 Separate composite physician scores were generated from the 5 Press Ganey and for the 3 HCAHPS physician items. Each item was weighted equally, with the maximum possible for Press Ganey composite score of 25 (sum of the maximum possible score of 5 on each of the 5 Press Ganey items) and the HCAHPS possible total was 3 (sum of the maximum possible score of 1 on each of the 3 HCAHPS items).

ANALYSIS AND STATISTICAL METHODS

We analyzed the data to assess for changes in frequency of self-reported behavior over the study period, changes in provider-level patient experience between baseline and study period, and the association between the these 2 outcomes. The self-reported etiquette-based behavior responses were scored as 1 for the lowest response (never) to 4 as the highest (always). With 7 questions, the maximum attainable score was 28. The maximum score was normalized to 100 for ease of interpretation (corresponding to percentage of time etiquette behaviors were employed, by self-report). Similarly, the maximum attainable self-reported QIB-related behavior score on the 4 questions was 16. This was also converted to 0-100 scale for ease of comparison.

Two additional sets of analyses were performed to evaluate changes in patient experience during the study period. First, the mean 12-month provider level patient experience composite score in the baseline period was compared with the 12-month composite score during the 12-month study period for the study group and the control group. These were assessed with and without adjusting for age, sex, race, and U.S. medical school graduate (USMG) status. In the second set of unadjusted and adjusted analyses, changes in biweekly composite scores during the study period were compared between the intervention and the control groups while accounting for correlation between observations from the same physician using mixed linear models. Linear mixed models were used to accommodate correlations among multiple observations made on the same physician by including random effects within each regression model. Furthermore, these models allowed us to account for unbalanced design in our data when not all physicians had an equal number of observations and data elements were collected asynchronously.24 Analyses were performed in R version 3.2.2 (The R Project for Statistical Computing, Vienna, Austria); linear mixed models were performed using the ‘nlme’ package.25

We hypothesized that self-reporting on biweekly surveys would result in increases in the frequency of the reported behavior in each arm. We also hypothesized that, because of biweekly reflection and self-reporting on etiquette-based bedside behavior, patient experience scores would increase in the study arm.

RESULTS

Of the 80 hospitalists approached to participate in the study, 64 elected to participate (80% participation rate). The mean response rate to the survey was 57.4% for the intervention arm and 85.7% for the control arm. Higher response rates were not associated with improved patient experience scores. Of the respondents, 43.1% were younger than 35 years of age, 51.5% practiced in academic settings, and 53.1% were female. There were no statistically significant differences in hospitalists’ baseline composite experience scores by gender, age, academic hospitalist status, USMG status, or English as a second language status. Similarly, there were no differences in poststudy composite experience scores based on physician characteristics.

Physicians reported high rates of etiquette-based behavior at baseline (mean score, 83.9 ± 3.3), and this showed moderate improvement over the study period (5.6% [3.9%-7.3%]; P < 0.0001). Similarly, there was a moderate increase in the frequency of self-reported behavior in the control arm (6.8% [3.5%-10.1%]; P < 0.0001). Hospitalists reported on 80.7% (77.6%-83.4%) of the biweekly surveys that they “almost always” wrapped up by asking, “Do you have any other questions or concerns” or something similar. In contrast, hospitalists reported on only 27.9% (24.7%-31.3%) of the biweekly surveys that they “almost always” sat down in the patient room.

The composite physician domain Press Ganey experience scores were no different for the intervention arm and the control arm during the 12-month baseline period (21.8 vs. 21.7; P = 0.90) and the 12-month intervention period (21.6 vs. 21.5; P = 0.75). Baseline self-reported behaviors were not associated with baseline experience scores. Similarly, there were no differences between the arms on composite physician domain HCAHPS experience scores during baseline (2.1 vs. 2.3; P = 0.13) and intervention periods (2.2 vs. 2.1; P = 0.33).

The difference-in-differences analysis of the baseline and postintervention composite scores between the intervention arm and the control arm was not statistically significant for Press Ganey composite physician experience scores (-0.163 vs. -0.322; P = 0.71) or HCAHPS composite physician scores (-0.162 vs. -0.071; P = 0.06). The results did not change when controlled for survey response rate (percentage of biweekly surveys completed by the hospitalist), age, gender, USMG status, English as a second language status, or percent clinical effort. The difference-in-differences analysis of the individual Press Ganey and HCAHPS physician domain items that were used to calculate the composite scores was also not statistically significant (Table 2).
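For readers less familiar with the approach, the point estimate behind this comparison can be illustrated with simple arithmetic. The sketch below assumes that the paired values reported above are the within-arm changes (postintervention minus baseline), listed as intervention arm first and control arm second; that ordering is an interpretation of the text, and the function name is hypothetical. It reproduces only the point estimate, not the adjusted models or the P values.

```python
def difference_in_differences(change_intervention, change_control):
    """Return the intervention-arm change minus the control-arm change."""
    return change_intervention - change_control


# Within-arm changes as reported in the text (assumed order: intervention, control).
press_ganey_did = difference_in_differences(-0.163, -0.322)
hcahps_did = difference_in_differences(-0.162, -0.071)

print(f"Press Ganey composite: {press_ganey_did:+.3f}")
print(f"HCAHPS composite: {hcahps_did:+.3f}")
```

Under these assumptions the estimates are about +0.159 points on the 25-point Press Ganey composite and -0.091 points on the 3-point HCAHPS composite, both small relative to the scale ranges.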

Changes in self-reported etiquette-based behavior were not associated with any changes in composite Press Ganey and HCAHPS experience scores or in individual items of the composite experience scores between the baseline and intervention periods. Similarly, biweekly self-reported etiquette behaviors were not associated with composite and individual item experience scores derived from responses of the patients discharged during the same 2-week reporting period. The intra-class correlation between observations from the same physician was only 0.02%, suggesting that most of the variation in scores was likely due to patient factors and did not result from differences between physicians.
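The intra-class correlation expresses the share of total score variance that lies between physicians rather than within them. The study obtained it from linear mixed models fitted with ‘nlme’; the sketch below swaps in a different and simpler technique, the classic one-way ANOVA estimator for balanced data, purely to show what the quantity measures. The function name and the toy scores are hypothetical.

```python
from statistics import mean


def icc_oneway(groups):
    """One-way random-effects ICC for balanced groups of equal size k.

    groups: list of lists, one inner list of scores per physician.
    """
    n = len(groups)
    k = len(groups[0]) if groups else 0
    if n < 2 or k < 2 or any(len(g) != k for g in groups):
        raise ValueError("need at least 2 groups, all of the same size >= 2")
    grand = mean(x for g in groups for x in g)
    # Mean square between physicians and mean square within physicians.
    msb = k * sum((mean(g) - grand) ** 2 for g in groups) / (n - 1)
    msw = sum((x - mean(g)) ** 2 for g in groups for x in g) / (n * (k - 1))
    return (msb - msw) / (msb + (k - 1) * msw)


# Toy data: two physicians, two patient scores each (hypothetical values).
mixed_icc = icc_oneway([[0, 2], [2, 4]])

# Toy data in which all variation lies between physicians.
clustered_icc = icc_oneway([[20, 20], [24, 24]])

print(f"mixed: {mixed_icc:.3f}, fully clustered: {clustered_icc:.3f}")
```

A value near 0, as reported in the study, means that knowing which physician cared for a patient explains almost none of the variation in that patient's experience score. Note that this ANOVA estimator can return slightly negative values when between-physician variation is smaller than within-physician variation.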

DISCUSSION

This 12-month randomized multicenter study of hospitalists showed that repeated self-reporting of etiquette-based behavior resulted in modest reported increases in performance of these behaviors. However, there was no associated increase in provider-level patient experience scores at the end of the study period when compared to baseline scores of the same physicians or when compared to the scores of the control group. The study demonstrated the feasibility of self-reporting of behaviors by physicians, with high participation when modest incentives were provided.

Educational and feedback strategies used to improve patient experience are very resource intensive. Training sessions provided at some hospitals may take hours, and sustained effects are unproved. The presence of an independent observer in patient rooms to generate feedback for providers is neither scalable nor sustainable outside of a research study environment.9-11,15,17,26-29 We attempted to use repeated physician self-reporting to reinforce the important and easy-to-adopt components of etiquette-based behavior in order to develop a more easily sustainable strategy. This may have failed for several reasons.

When combining “always” and “usually” responses, the physicians in our study reported a high level of etiquette behavior at baseline. If physicians believe that they are performing well at baseline, they would not consider this to be an area in need of improvement. Bigger changes in behavior may have been possible had the physicians rated themselves less favorably at baseline. Inflated or high baseline self-assessment of performance might also have led to limited success of other types of educational interventions had they been employed.

Studies published since the rollout of our study have shown that physicians significantly overestimate how frequently they perform these etiquette behaviors.30,31 It is likely that this was also the case among our study subjects. This may, at best, indicate that a much larger change in the level of self-reported performance would be needed to result in meaningful actual changes or, at worst, may render self-reported etiquette behavior entirely unreliable. Interventions designed to improve etiquette-based behavior might need to provide feedback about performance.

A program that provides education on the importance of etiquette-based behaviors, obtains objective measures of performance of these behaviors, and offers individualized feedback may be more likely to increase the desired behaviors. The absence of objective measurement and feedback is a limitation of our study. However, we aimed to test a method that required limited resources. Additionally, our method for attributing HCAHPS scores to an individual physician, based on weighted scores that were calculated according to the proportion of days each hospitalist billed for the hospitalization, may be inaccurate. It is possible that each interaction does not contribute equally to the overall score. A team-based intervention with team-level experience measurements could overcome this limitation.

CONCLUSION

This randomized trial demonstrated the feasibility of self-assessment of bedside etiquette behaviors by hospitalists but failed to demonstrate a meaningful impact on patient experience through self-report. These findings suggest that more intensive interventions, perhaps involving direct observation, peer-to-peer mentoring, or other techniques, may be required to significantly affect physician etiquette behaviors.

Disclosure

Johns Hopkins Hospitalist Scholars Program provided funding support. Dr. Qayyum is a consultant for Sunovion. The other authors have nothing to report.

1. Blumenthal D, Kilo CM. A report card on continuous quality improvement. Milbank Q. 1998;76(4):625-648.

2. Shortell SM, Bennett CL, Byck GR. Assessing the impact of continuous quality improvement on clinical practice: What it will take to accelerate progress. Milbank Q. 1998;76(4):593-624.

3. Mann RK, Siddiqui Z, Kurbanova N, Qayyum R. Effect of HCAHPS reporting on patient satisfaction with physician communication. J Hosp Med. 2015;11(2):105-110.

4. Rivers PA, Glover SH. Health care competition, strategic mission, and patient satisfaction: research model and propositions. J Health Organ Manag. 2008;22(6):627-641.

5. Kim SS, Kaplowitz S, Johnston MV. The effects of physician empathy on patient satisfaction and compliance. Eval Health Prof. 2004;27(3):237-251.

6. Stelfox HT, Gandhi TK, Orav EJ, Gustafson ML. The relation of patient satisfaction with complaints against physicians and malpractice lawsuits. Am J Med. 2005;118(10):1126-1133.

7. Rodriguez HP, Rodday AM, Marshall RE, Nelson KL, Rogers WH, Safran DG. Relation of patients’ experiences with individual physicians to malpractice risk. Int J Qual Health Care. 2008;20(1):5-12.

8. Cydulka RK, Tamayo-Sarver J, Gage A, Bagnoli D. Association of patient satisfaction with complaints and risk management among emergency physicians. J Emerg Med. 2011;41(4):405-411.

9. Windover AK, Boissy A, Rice TW, Gilligan T, Velez VJ, Merlino J. The REDE model of healthcare communication: Optimizing relationship as a therapeutic agent. Journal of Patient Experience. 2014;1(1):8-13.

10. Chou CL, Hirschmann K, Fortin AH 6th, Lichstein PR. The impact of a faculty learning community on professional and personal development: the facilitator training program of the American Academy on Communication in Healthcare. Acad Med. 2014;89(7):1051-1056.

11. Kennedy M, Denise M, Fasolino M, John P, Gullen M, David J. Improving the patient experience through provider communication skills building. Patient Experience Journal. 2014;1(1):56-60.

12. Braverman AM, Kunkel EJ, Katz L, et al. Do I buy it? How AIDET™ training changes residents’ values about patient care. Journal of Patient Experience. 2015;2(1):13-20.

13. Riess H, Kelley JM, Bailey RW, Dunn EJ, Phillips M. Empathy training for resident physicians: a randomized controlled trial of a neuroscience-informed curriculum. J Gen Intern Med. 2012;27(10):1280-1286.

14. Rothberg MB, Steele JR, Wheeler J, Arora A, Priya A, Lindenauer PK. The relationship between time spent communicating and communication outcomes on a hospital medicine service. J Gen Intern Med. 2012;27(2):185-189.

15. O’Leary KJ, Cyrus RM. Improving patient satisfaction: timely feedback to specific physicians is essential for success. J Hosp Med. 2015;10(8):555-556.

16. Indovina K, Keniston A, Reid M, et al. Real‐time patient experience surveys of hospitalized medical patients. J Hosp Med. 2016;11(4):251-256.

17. Banka G, Edgington S, Kyulo N, et al. Improving patient satisfaction through physician education, feedback, and incentives. J Hosp Med. 2015;10(8):497-502.

18. Kahn MW. Etiquette-based medicine. N Engl J Med. 2008;358(19):1988-1989.

19. Arora V, Gangireddy S, Mehrotra A, Ginde R, Tormey M, Meltzer D. Ability of hospitalized patients to identify their in-hospital physicians. Arch Intern Med. 2009;169(2):199-201.

20. Francis JJ, Pankratz VS, Huddleston JM. Patient satisfaction associated with correct identification of physicians’ photographs. Mayo Clin Proc. 2001;76(6):604-608.

21. Strasser F, Palmer JL, Willey J, et al. Impact of physician sitting versus standing during inpatient oncology consultations: patients’ preference and perception of compassion and duration. A randomized controlled trial. J Pain Symptom Manage. 2005;29(5):489-497.

22. Dudas RA, Lemerman H, Barone M, Serwint JR. PHACES (Photographs of Academic Clinicians and Their Educational Status): a tool to improve delivery of family-centered care. Acad Pediatr. 2010;10(2):138-145.

23. Herzke C, Michtalik H, Durkin N, et al. A method for attributing patient-level metrics to rotating providers in an inpatient setting. J Hosp Med. Under revision.

24. Holden JE, Kelley K, Agarwal R. Analyzing change: a primer on multilevel models with applications to nephrology. Am J Nephrol. 2008;28(5):792-801.

25. Pinheiro J, Bates D, DebRoy S, Sarkar D. Linear and nonlinear mixed effects models. R package version. 2007;3:57.

26. Braverman AM, Kunkel EJ, Katz L, et al. Do I buy it? How AIDET™ training changes residents’ values about patient care. Journal of Patient Experience. 2015;2(1):13-20.

27. Riess H, Kelley JM, Bailey RW, Dunn EJ, Phillips M. Empathy training for resident physicians: A randomized controlled trial of a neuroscience-informed curriculum. J Gen Intern Med. 2012;27(10):1280-1286.

28. Raper SE, Gupta M, Okusanya O, Morris JB. Improving communication skills: A course for academic medical center surgery residents and faculty. J Surg Educ. 2015;72(6):e202-e211.

29. Indovina K, Keniston A, Reid M, et al. Real‐time patient experience surveys of hospitalized medical patients. J Hosp Med. 2016;11(4):251-256.

30. Block L, Hutzler L, Habicht R, et al. Do internal medicine interns practice etiquette‐based communication? A critical look at the inpatient encounter. J Hosp Med. 2013;8(11):631-634.

31. Tackett S, Tad-y D, Rios R, Kisuule F, Wright S. Appraising the practice of etiquette-based medicine in the inpatient setting. J Gen Intern Med. 2013;28(7):908-913.

1. Blumenthal D, Kilo CM. A report card on continuous quality improvement. Milbank Q. 1998;76(4):625-648. PubMed

2. Shortell SM, Bennett CL, Byck GR. Assessing the impact of continuous quality improvement on clinical practice: What it will take to accelerate progress. Milbank Q. 1998;76(4):593-624. PubMed

3. Mann RK, Siddiqui Z, Kurbanova N, Qayyum R. Effect of HCAHPS reporting on patient satisfaction with physician communication. J Hosp Med. 2015;11(2):105-110. PubMed

4. Rivers PA, Glover SH. Health care competition, strategic mission, and patient satisfaction: research model and propositions. J Health Organ Manag. 2008;22(6):627-641. PubMed

5. Kim SS, Kaplowitz S, Johnston MV. The effects of physician empathy on patient satisfaction and compliance. Eval Health Prof. 2004;27(3):237-251. PubMed

6. Stelfox HT, Gandhi TK, Orav EJ, Gustafson ML. The relation of patient satisfaction with complaints against physicians and malpractice lawsuits. Am J Med. 2005;118(10):1126-1133. PubMed

7. Rodriguez HP, Rodday AM, Marshall RE, Nelson KL, Rogers WH, Safran DG. Relation of patients’ experiences with individual physicians to malpractice risk. Int J Qual Health Care. 2008;20(1):5-12. PubMed

8. Cydulka RK, Tamayo-Sarver J, Gage A, Bagnoli D. Association of patient satisfaction with complaints and risk management among emergency physicians. J Emerg Med. 2011;41(4):405-411. PubMed

9. Windover AK, Boissy A, Rice TW, Gilligan T, Velez VJ, Merlino J. The REDE model of healthcare communication: Optimizing relationship as a therapeutic agent. Journal of Patient Experience. 2014;1(1):8-13.

10. Chou CL, Hirschmann K, Fortin AH 6th, Lichstein PR. The impact of a faculty learning community on professional and personal development: the facilitator training program of the American Academy on Communication in Healthcare. Acad Med. 2014;89(7):1051-1056. PubMed

11. Kennedy M, Denise M, Fasolino M, John P, Gullen M, David J. Improving the patient experience through provider communication skills building. Patient Experience Journal. 2014;1(1):56-60.

12. Braverman AM, Kunkel EJ, Katz L, et al. Do I buy it? How AIDET™ training changes residents’ values about patient care. Journal of Patient Experience. 2015;2(1):13-20.

13. Riess H, Kelley JM, Bailey RW, Dunn EJ, Phillips M. Empathy training for resident physicians: a randomized controlled trial of a neuroscience-informed curriculum. J Gen Intern Med. 2012;27(10):1280-1286.

14. Rothberg MB, Steele JR, Wheeler J, Arora A, Priya A, Lindenauer PK. The relationship between time spent communicating and communication outcomes on a hospital medicine service. J Gen Intern Med. 2012;27(2):185-189.

15. O’Leary KJ, Cyrus RM. Improving patient satisfaction: timely feedback to specific physicians is essential for success. J Hosp Med. 2015;10(8):555-556.

16. Indovina K, Keniston A, Reid M, et al. Real‐time patient experience surveys of hospitalized medical patients. J Hosp Med. 2016;11(4):251-256.

17. Banka G, Edgington S, Kyulo N, et al. Improving patient satisfaction through physician education, feedback, and incentives. J Hosp Med. 2015;10(8):497-502.

18. Kahn MW. Etiquette-based medicine. N Engl J Med. 2008;358(19):1988-1989.

19. Arora V, Gangireddy S, Mehrotra A, Ginde R, Tormey M, Meltzer D. Ability of hospitalized patients to identify their in-hospital physicians. Arch Intern Med. 2009;169(2):199-201.

20. Francis JJ, Pankratz VS, Huddleston JM. Patient satisfaction associated with correct identification of physicians’ photographs. Mayo Clin Proc. 2001;76(6):604-608.

21. Strasser F, Palmer JL, Willey J, et al. Impact of physician sitting versus standing during inpatient oncology consultations: patients’ preference and perception of compassion and duration. A randomized controlled trial. J Pain Symptom Manage. 2005;29(5):489-497.

22. Dudas RA, Lemerman H, Barone M, Serwint JR. PHACES (Photographs of Academic Clinicians and Their Educational Status): a tool to improve delivery of family-centered care. Acad Pediatr. 2010;10(2):138-145.

23. Herzke C, Michtalik H, Durkin N, et al. A method for attributing patient-level metrics to rotating providers in an inpatient setting. J Hosp Med. Under revision.

24. Holden JE, Kelley K, Agarwal R. Analyzing change: a primer on multilevel models with applications to nephrology. Am J Nephrol. 2008;28(5):792-801.

25. Pinheiro J, Bates D, DebRoy S, Sarkar D. Linear and nonlinear mixed effects models. R package version. 2007;3:57.

26. Braverman AM, Kunkel EJ, Katz L, et al. Do I buy it? How AIDET™ training changes residents’ values about patient care. Journal of Patient Experience. 2015;2(1):13-20.

27. Riess H, Kelley JM, Bailey RW, Dunn EJ, Phillips M. Empathy training for resident physicians: a randomized controlled trial of a neuroscience-informed curriculum. J Gen Intern Med. 2012;27(10):1280-1286.

28. Raper SE, Gupta M, Okusanya O, Morris JB. Improving communication skills: A course for academic medical center surgery residents and faculty. J Surg Educ. 2015;72(6):e202-e211.

29. Indovina K, Keniston A, Reid M, et al. Real‐time patient experience surveys of hospitalized medical patients. J Hosp Med. 2016;11(4):251-256.

30. Block L, Hutzler L, Habicht R, et al. Do internal medicine interns practice etiquette‐based communication? A critical look at the inpatient encounter. J Hosp Med. 2013;8(11):631-634.

31. Tackett S, Tad-y D, Rios R, Kisuule F, Wright S. Appraising the practice of etiquette-based medicine in the inpatient setting. J Gen Intern Med. 2013;28(7):908-913.

© 2017 Society of Hospital Medicine